-

If you want a single-player Lethal Company, don't miss the developer's previous It Steals

Pac-Man meets Slender Man

-

I followed this ox around in Manor Lords for a day to see what wisdom it could teach me

Quite a lot, it turns out

-

Review: Phantom Fury review: a retro shooter obsessed with inconsequential do-hickeys

No arm in trying

-

Stay awhile and play this free Diablo-inspired indie as the mayor of Tristram

The home of the official unofficial best thing in games

-

Counter-Strike 2 finally gets left-handed view models, seven months after launch

Valve reinstates a CS:GO staple

-

Even if we never get Stellar Blade on PC, action-RPG Aikode looks comparably swish

Another student of the school of Nier: Automata

-

I tried to recreate Stray in Lethal Company, with disastrous results

You know what they say about curiosity and the cat

-

Review: Another Crab's Treasure review: a playful Soulslike for everyone, especially if you like crabs

This will more than tide you over

-

The Maw - 22nd-27th April 2024

This week's least outwardly offputting game releases, plus our weekly newsblog

-

Upgrade your Steam Deck storage with Corsair's MP600 Mini 1TB SSD for just £70

Pair one of the best M.2 2230 SSDs with your Steam Deck for a healthy storage boost.

-

£25 for a pair of speakers sounds great to us

These Creative Pebble V3 speakers are a great choice for rich, clear audio on a budget.

-

Get the fastest PCIe 4.0 SSD for $139 at Amazon

Save over $50 on the Crucial T500.

-

You can get a WD Black SN850X 2TB SSD for as little as £112 but they're selling fast

Save up to £28 on this speedy SSD using the eBay app and a special discount code.

-

It’s gone down as you’d expect

-

The survival-horror game arrived in March after multiple delays

-

Its puzzle-platforming is even built on some of Celeste’s open-source code

-

Developer, meanwhile, is planning to rebalance the archers

-

You can now feed people to the big beast in The Wandering Village

A snack on the back

-

New Escape From Tarkov PvE mode exclusive to pricey Unheard edition despite promises of free DLC

Community manager insists mode doesn't qualify as DLC

-

Supporters only: Unpacking the cursed digital object that is Steam’s clown reaction emoji

But doctor, I am mostly positive!

I don’t want to strike sweaty terror into anyone’s gentle hearts here, but I’m beginning to suspect lately that the friendly clown emojis I keep seeing as reactions to Steam user reviews aren’t actually a colorful kudos to the writer for being a chucklesome and whimsical individual. I’m starting to fear, actually, that this one icon of a behatted japester may have been widely adopted …

-

Supporters only: Lethal Company is as much about protecting the mundane, as it is a horror game

They should put my new coffee grinder in there

-

Supporters only: Watching Civil War made me want more games with black and white stances on morality

I can't think of any good modern examples!

-

Supporters only: Piecing It Together gave me a timely little island of calm

Good thing I got my pieces

-

Review: Sand Land review: a boring Mad Max lite that should have been very exciting

More like Bland Land, am I right?

-

The best farming games like Stardew Valley on PC

The cream of the crop

-

A very puzzling collection

-

The 15 best open world games on PC

Keep your options open

-

Review: Botany Manor review: peaceful and beautiful best-in-show plant puzzles

Come away, O human child! To the waters and the wild

-

The best microSD cards for the Steam Deck

Expand your Steam Deck’s storage with these tried-and-tested cards

-

Should You Bother With... Hall effect keyboards?

Heroes of might and magnets

-

Arise now, ye Cerim: No Rest for the Wicked’s performance updates are underway

Already running better on low-end GPUs

-

No Rest for the Wicked’s PC performance suggests the wicked might be better off waiting

Got them early access growing pains

-

Gorgeous interactive fiction Pine: A Story Of Loss is a small sad game about a big sad man

Chopping wood, cutting onions

-

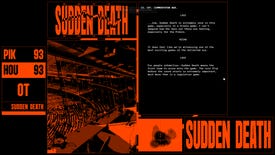

SUDDEN DEATH is a free slice of interactive fiction about love, drugs, and Australian football

some of it is also about chips

-

How to make weapons and armor in Manor Lords

Here's how to construct a good supply of weapons and armor in Manor Lords

-

How to get started with Manor Lords

-

How to increase Approval in Manor Lords

Here's how to keep your Approval level high in Manor Lords

-

How to build the Manor in Manor Lords

Here's how to construct the titular Manor in Manor Lords

-

King Legacy codes list [April 2024]

Codes you can redeem in King Legacy for free Beli, Gems, and more!

-

Anime Fighters Simulator codes

Nab yourself a variety of boosts and other rewards with these codes

-

Redeem these Blade Ball codes for free Coins and free Spins

-

Honkai Star Rail codes (April 2024)

Gain Stellar Jade and more rewards with these Honkai: Star Rail codes before they expire!

-

Manor Lords: How to build Market Stalls

How to set up a full Marketplace of Stalls in Manor Lords

-

Manor Lords: Best Development Upgrades

Prioritise these Development Upgrades for the best possible start in Manor Lords